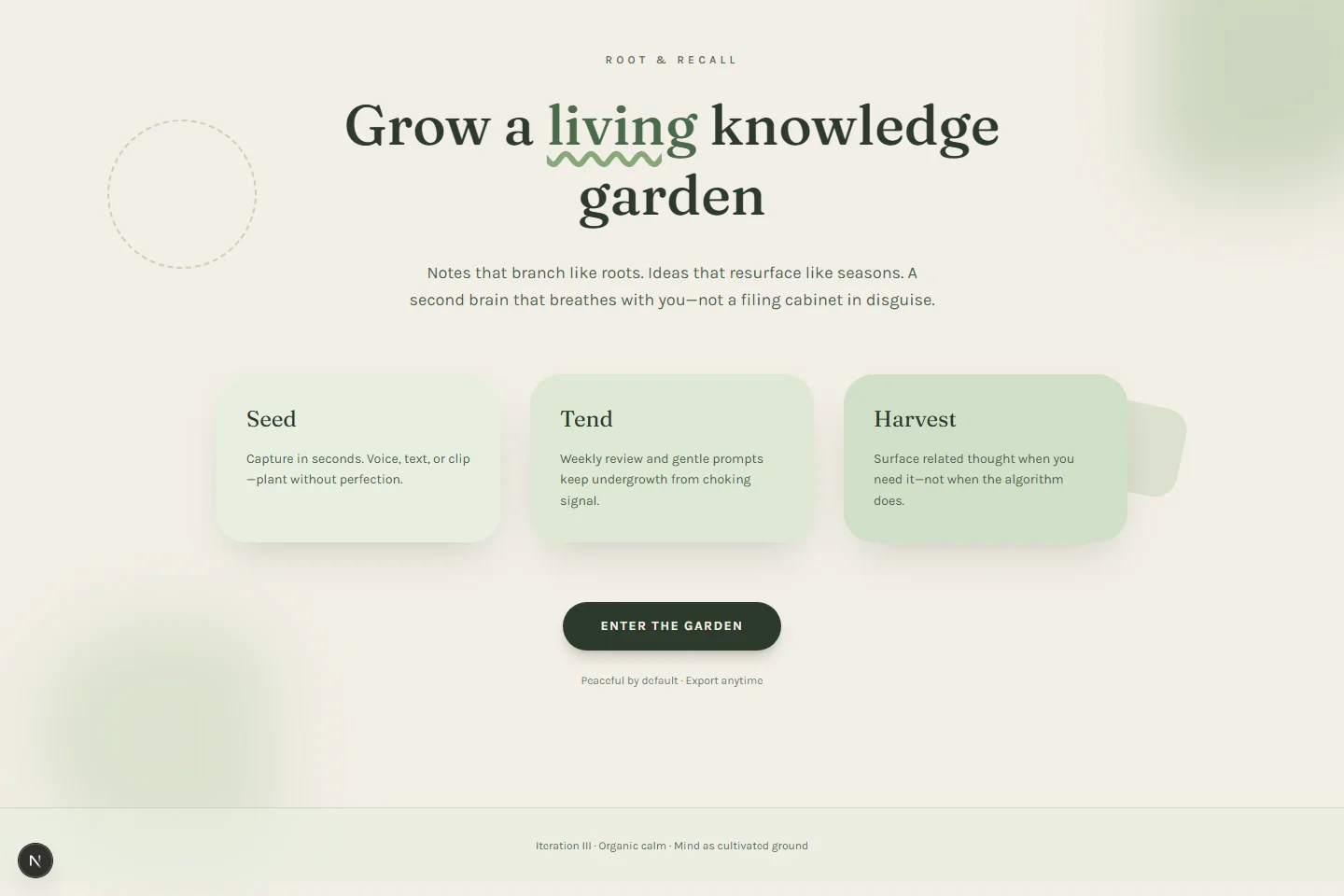

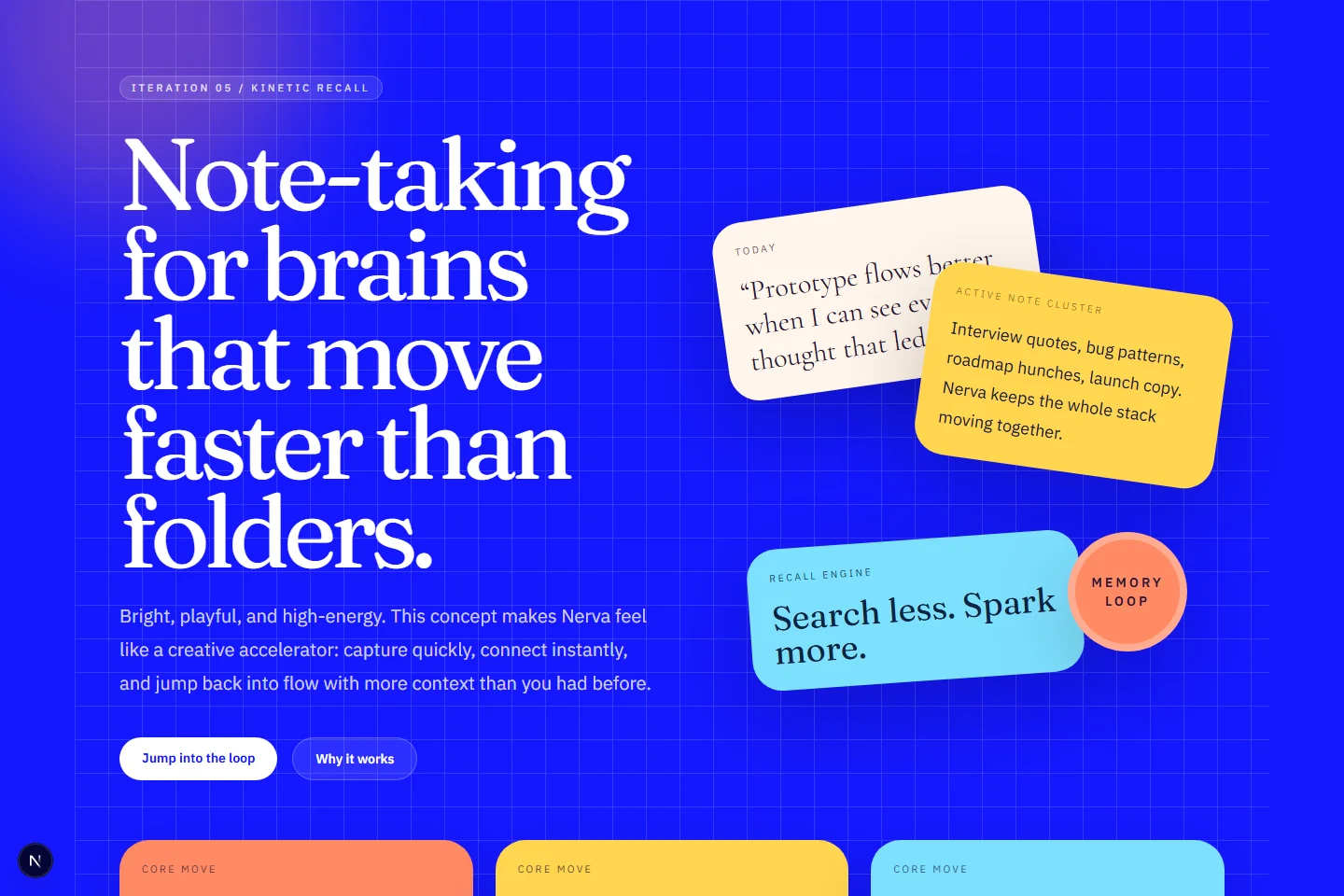

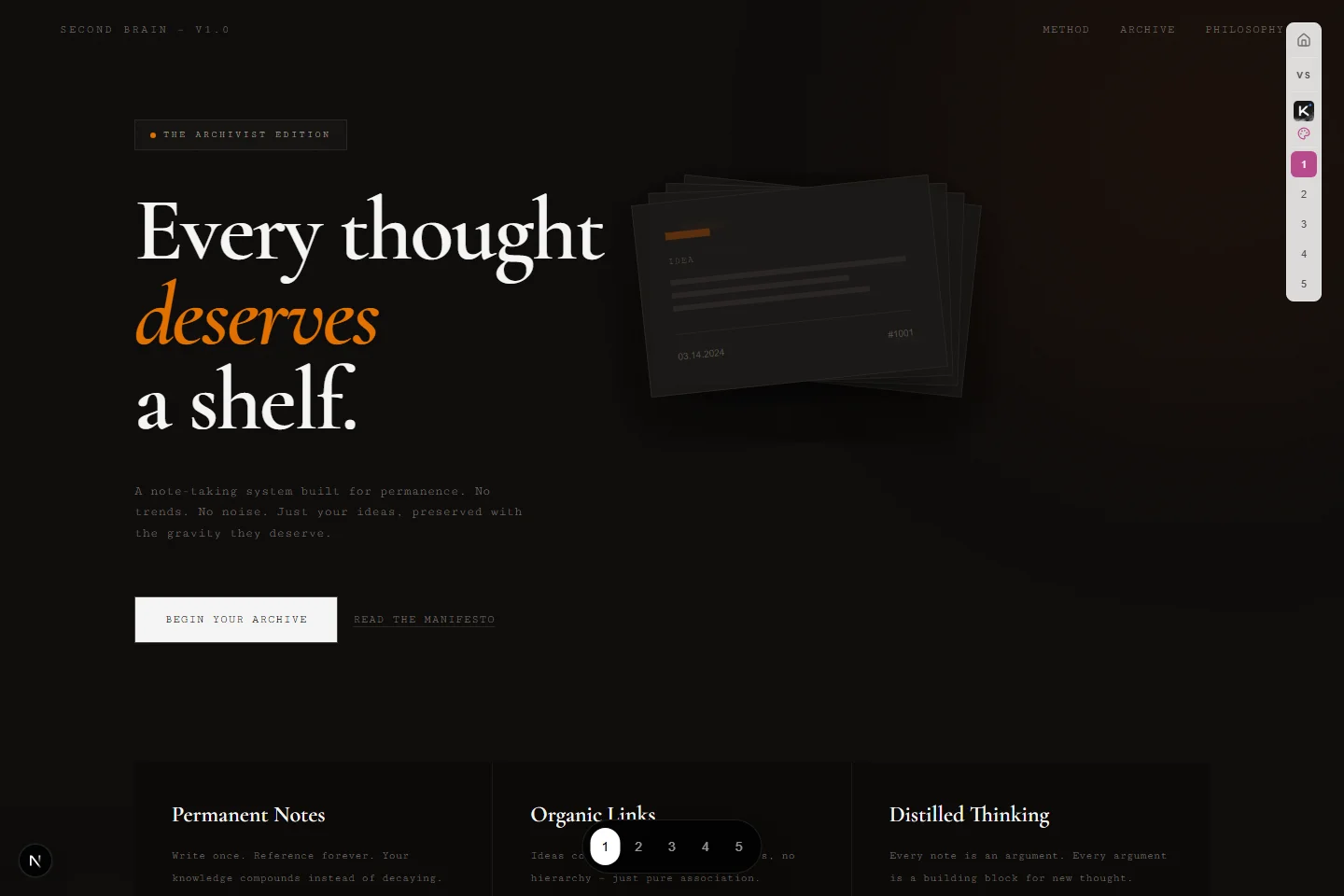

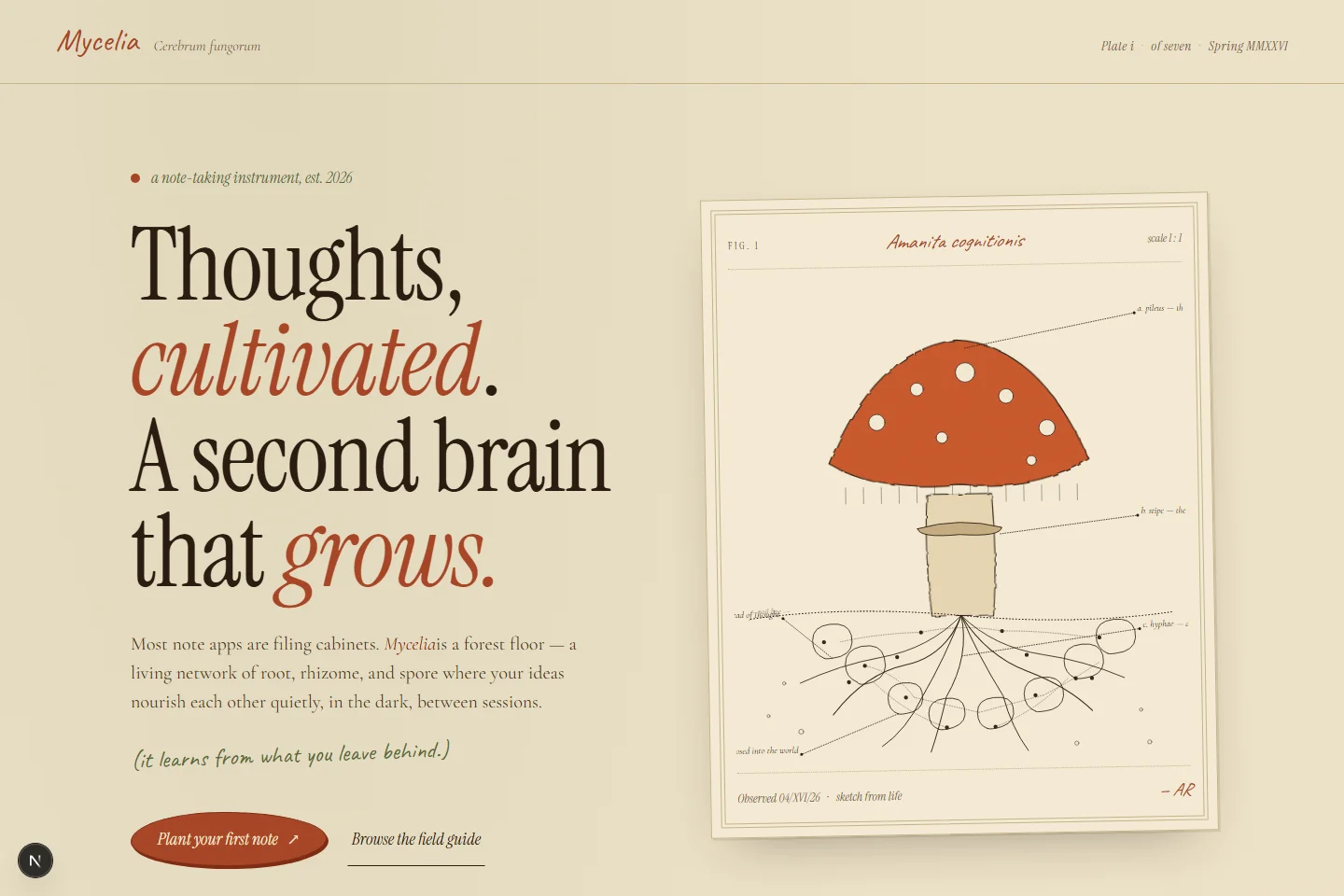

It’s not just “4.6 except the lowest generations got pulled up.” That undersells it. This is a real job: not only the layout, but taking all these different pieces, customizing assets, and putting them together so they fit the style of what you’re building. I’m noticing a lot of intentional custom assets. In iteration two, the ASCII art for each vault name, the type treatment, and the session live section all read as deliberate. Page three of iteration three is the one that got me: a full mushroom illustration, and card icons that look like proper SVG marks instead of the random emoji some Opus runs lean on. The field notes are set in the right font, and the imagery fits the site even when it’s a little silly. Iteration five does the same thing: every illustration feels like someone actually styled it. Down in the practice objects and invitation sections, the one, two, three kanji touches are just beautiful.